Hardware 101 - Latches, Memories and Why Off-Chip Sucks

01 Jan 2014

Because I am about to embark on a discussion of microchip design and examination, it is critical that we first agree on the fundamentals of microchip design and appreciate the design constraints that manufacturing limitations and the laws of physics impose on hardware designers.

Latches

First, let's rediscover a latch (Latches on Wikipedia). Latches are fundamental to computer design and construction because they are the smallest unit of storage that we can describe and build in hardware: a single latch holds a single bit. From here on out, everything we do will be based on connecting latches together and making assumptions about how long it takes for values to get from one latch to the next.
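For readers who would rather not chase the link, here is a rough behavioral sketch of one common kind of latch, the set/reset (SR) latch built from two cross-coupled NOR gates. The class and method names are hypothetical, and the loop that re-evaluates the gates until nothing changes is only a stand-in for the analog feedback that makes a real latch settle.

```python
def nor(a, b):
    """A NOR gate on single bits (0 or 1)."""
    return 1 - (a | b)


class SRLatch:
    """Cross-coupled NOR latch: each gate's output feeds the other gate's input."""

    def __init__(self):
        # Assumption: the latch powers up holding 0 (q=0, q_bar=1).
        self.q, self.q_bar = 0, 1

    def update(self, s, r):
        """Apply set/reset inputs and return q once the gates reach steady state.

        s=1, r=0 stores 1; s=0, r=1 stores 0; s=0, r=0 holds the old value.
        s=1, r=1 is the forbidden input for this kind of latch.
        """
        for _ in range(4):  # a few passes are enough for the feedback to settle
            q = nor(r, self.q_bar)
            q_bar = nor(s, q)
            if (q, q_bar) == (self.q, self.q_bar):
                break  # steady state: outputs no longer change
            self.q, self.q_bar = q, q_bar
        return self.q


latch = SRLatch()
print(latch.update(s=1, r=0))  # set   -> 1
print(latch.update(s=0, r=0))  # hold  -> 1
print(latch.update(s=0, r=1))  # reset -> 0
print(latch.update(s=0, r=0))  # hold  -> 0
```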

Operations on latched data

Groups of latches hold bits which we can interpret as binary integers. Some operations on these integers are expensive in terms of transistors, latches and time; others are cheap. Addition of two numbers, for instance, is cheap enough that we can treat it as essentially free. The bitwise operations (xor, or, and, nand) and the shifts are also so inexpensive as to be negligible. Multiplication is more expensive, and the crown goes to division and modulo, which are slow and painful to compute on integers.
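To see why addition is cheap (though not literally free), here is a sketch of a ripple-carry adder built only from the bitwise operations listed above. Each bit position is one "full adder"; the catch is that the carry has to ripple through all `width` positions, so the delay grows with the word size, which is why real chips tend to use faster carry-lookahead designs. The function names are illustrative.

```python
def full_add(a, b, carry_in):
    """Add three single bits; return (sum_bit, carry_out)."""
    s = a ^ b ^ carry_in
    carry_out = (a & b) | (carry_in & (a ^ b))
    return s, carry_out


def ripple_add(x, y, width=8):
    """Add two width-bit unsigned integers one bit position at a time."""
    result, carry = 0, 0
    for i in range(width):
        a = (x >> i) & 1
        b = (y >> i) & 1
        s, carry = full_add(a, b, carry)  # the carry feeds the next position
        result |= s << i
    return result  # the final carry is dropped, like a fixed-width register


print(ripple_add(23, 19))   # 42
print(ripple_add(200, 100)) # 44, because 300 wraps around in 8 bits
```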

Enough about transistors and bits; let's think about chips for a minute. Within a processor there exists at least one clock. A clock is a simple circuit which emits a signal at a known interval. This clock signal is used to time the operations of the chip, especially the moments at which latches capture and lock in new values.

From latches to registers

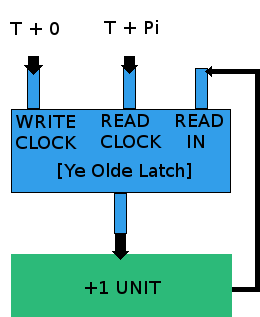

Let's say we have a processor which adds one every cycle. How does it store this information between one cycle and the next? In a latch! (Okay, fine, really a register, which is just a fancy name for an array of latches.) So what do we need to build this processor? We need a single register with N input wires and N output wires, a "set" wire and a "reset" wire, where the set and reset lines drive the individual latches' set and reset inputs. We need a simple add-one circuit, and we need a pair of clocks set to tick at T and T+Pi: they tick at the same frequency, but their ticks are half a cycle (a phase of Pi) apart in time.

Wire the T+0 clock to the reset line, the register’s outputs to the inputs of the adder, the outputs of the adder to the inputs of the register, and the T+Pi clock to the “set” input of the register.

In this simple circuit, the latches continually emit the value last written to them. We will assume that this value is magically zero when power is first applied. The adder gets this value, adds one, and its output wires now carry the binary representation of 0+1, or 1. Assuming that the adder settles within the half cycle (Pi) before the second clock ticks, the second clock will tick and set the register to 1. In the next cycle we add to get 2 and latch it in, then add to get 3 and latch it in, and so forth.
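A cycle-level sketch of the counter in Python. It only models what the register holds after each "set" tick, assumes the adder's output has already settled by then, and abstracts away the reset line entirely; `run_counter` and the `width` parameter are illustrative choices, with the count wrapping around the way an N-bit register would.

```python
def run_counter(cycles, width=8):
    """Return the register contents after each of `cycles` clock cycles."""
    register = 0  # assumption: the register "magically" powers up as zero
    history = []
    for _ in range(cycles):
        # Between ticks: the add-one circuit settles on register + 1,
        # truncated to the N wires the register actually has.
        adder_out = (register + 1) % (1 << width)
        # On the "set" tick: the register latches the adder's output.
        register = adder_out
        history.append(register)
    return history


print(run_counter(5))           # [1, 2, 3, 4, 5]
print(run_counter(5, width=2))  # [1, 2, 3, 0, 1], a 2-bit register wraps
```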

Now what if we wanted to count up by one faster than this circuit does? Well, how fast does this circuit count up by one? The circuit I've defined only deals with time abstractly. What bounds the clock period T? T is bounded by the time it takes to add one in binary, plus some small constants due to the charge latencies of the various wires involved. That is, our circuit can only behave correctly if it reaches the next "steady state" before the clock ticks, so our clock can tick no faster than our circuit reaches the next steady state.
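The bound can be written as a one-line calculation: the clock period has to cover the adder settling, plus the wire charging delays, plus (an assumption not spelled out above) a short "setup" window in which the latch inputs must hold still before the tick. The delay figures below are made-up placeholders, not measurements of any real chip.

```python
def max_clock_hz(adder_delay_s, wire_delay_s, setup_s):
    """Fastest clock at which the circuit still reaches steady state every cycle."""
    # The period must be at least the sum of everything that has to settle.
    min_period_s = adder_delay_s + wire_delay_s + setup_s
    return 1.0 / min_period_s


# Placeholder delays: 200 ps for the adder, 50 ps of wire, 50 ps of setup.
f = max_clock_hz(200e-12, 50e-12, 50e-12)
print(f"{f / 1e9:.2f} GHz")  # 3.33 GHz
```

Halving the adder delay in this sketch only raises the ceiling to 5 GHz, because the fixed wire and setup costs do not shrink with it.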

What does this mean for a processor? It means that a processor or microchip of a given size can only "tick" (perform one clock cycle) as fast as it can reach the next steady state. Electrical signals propagate no faster than the speed of light (in real wires, noticeably slower), and the size of the chip is known. Therefore we can place a lower bound on the cycle time, or equivalently an upper bound on the clock frequency, for a chip of known size! The farthest a signal will reasonably have to travel across a circuit is corner to corner along the wiring grid, twice the edge length, and it takes at least that distance divided by c to get there. So we can build a table mapping chip sizes to upper bounds on frequency! Smaller is faster, and we've just reinvented Moore's law, of sorts! We've also invented overclocking: running a processor faster than it was rated for, usually by raising its voltage (and with it the current) to keep it stable, at the expense of extra heat…
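Here is that table as a few lines of Python, using exactly the rule above: worst-case distance is twice the die edge, and the signal moves at most at c. The die sizes are arbitrary examples.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def frequency_upper_bound_hz(edge_m):
    """Upper bound on clock frequency for a square die with the given edge length."""
    worst_distance_m = 2 * edge_m  # corner to corner along the wiring grid
    min_cycle_s = worst_distance_m / C  # the signal cannot beat light
    return 1.0 / min_cycle_s


for edge_mm in (5, 10, 20, 30):
    f = frequency_upper_bound_hz(edge_mm / 1000)
    print(f"{edge_mm:>3} mm die: at most {f / 1e9:5.1f} GHz")
```

A 10 mm die comes out at roughly 15 GHz. That is well above real clock rates, because on-chip wires carry signals far slower than c and the logic itself (like the adder) also takes time, so the bound is loose. It does, however, show why shrinking the chip raises the ceiling.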

DRAM

Now we need a way to store far more data than the handful of registers above can carry from one clock cycle to the next. A modern machine needs gigabytes and gigabytes of memory. Latches consume a lot of transistors and consequently chip area. This means that without increasing the size of our chips (and consequently hurting our clock speed!) we can't fit much storage on a single chip. So what if we built separate chips that do nothing but store bits? This is roughly DRAM (DRAM on Wikipedia), except that instead of a full latch, each DRAM bit is a tiny capacitor plus a single transistor. That packs bits far more densely, at the cost of having to refresh the stored charge periodically.
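To put a rough number on the area argument, here is a back-of-the-envelope transistor count for one gibibyte, assuming a 6-transistor latch-style (SRAM) cell per bit versus DRAM's one transistor and one capacitor per bit. Both per-bit figures are textbook approximations rather than data for a specific part, and the count ignores decoders, sense amplifiers and other support circuitry.

```python
GIB_BITS = 8 * 2**30  # bits in one gibibyte

# Assumed per-bit costs (typical textbook figures, not a specific product):
SRAM_TRANSISTORS_PER_BIT = 6  # two cross-coupled inverters + two access transistors
DRAM_TRANSISTORS_PER_BIT = 1  # one access transistor (plus one capacitor)

sram_transistors = GIB_BITS * SRAM_TRANSISTORS_PER_BIT
dram_transistors = GIB_BITS * DRAM_TRANSISTORS_PER_BIT

print(f"1 GiB of latch-style cells: {sram_transistors / 1e9:.1f} billion transistors")
print(f"1 GiB of DRAM cells:        {dram_transistors / 1e9:.1f} billion transistors")
```

Roughly 51.5 billion transistors versus 8.6 billion: a sixfold difference in transistors before even counting wiring, which is why bulk memory is built from the denser cell.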

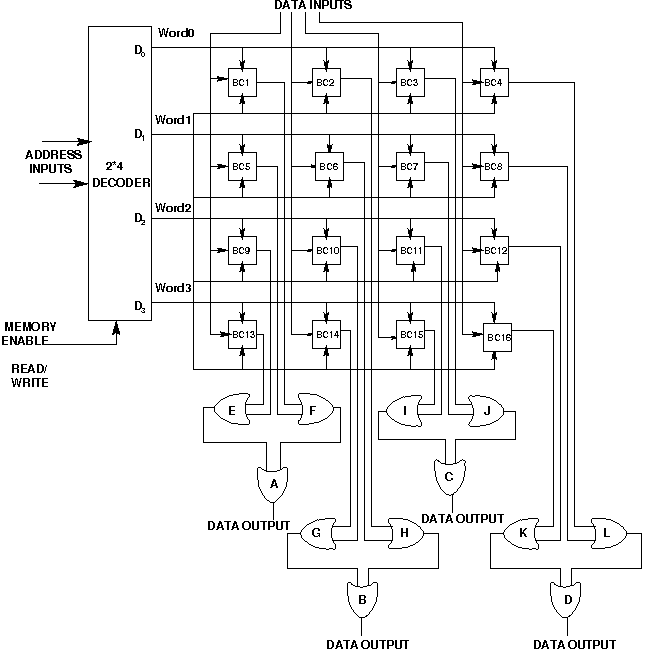

Think of DRAM as a grid of width N and height M, from which we can "read" a block of K bits with one operation. To read from DRAM, we use some of our address bits to pick a row (an index in [0, M); we're going in height here) and power it. Every cell in that row dumps its value onto the column wires, where sense amplifiers turn the tiny charges back into clean bits, giving us an N-bit vector. The remaining address bits then select a "line" of K bits out of that vector, which we can interpret however we wish.
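A toy model of that addressing scheme: the high part of an address picks the row, and the low part picks which K-bit slice of the row comes back. The class name, the address split and the list-of-lists storage are illustrative assumptions; real DRAM adds banks, timing constraints and refresh on top of this.

```python
class DramArray:
    """Toy DRAM: M rows of N bits, read or written K bits at a time."""

    def __init__(self, rows, cols, k):
        if cols % k != 0:
            raise ValueError("row width N must be a multiple of K")
        self.rows, self.cols, self.k = rows, cols, k
        self.cells = [[0] * cols for _ in range(rows)]  # every bit starts at 0

    def _locate(self, address):
        # High part of the address picks the row, low part picks a K-bit slice.
        row, block = divmod(address, self.cols // self.k)
        if not 0 <= row < self.rows:
            raise IndexError("address out of range")
        return row, block * self.k

    def read(self, address):
        row, start = self._locate(address)
        row_buffer = list(self.cells[row])  # activating a row senses all N bits
        return row_buffer[start:start + self.k]  # column select keeps K of them

    def write(self, address, bits):
        row, start = self._locate(address)
        if len(bits) != self.k:
            raise ValueError("must write exactly K bits")
        self.cells[row][start:start + self.k] = list(bits)


dram = DramArray(rows=4, cols=16, k=4)  # M=4, N=16, K=4
dram.write(5, [1, 0, 1, 1])  # address 5 -> row 1, bits 4..7
print(dram.read(5))  # [1, 0, 1, 1]
```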

On a modern-ish 32-bit machine, this bit vector might hold something like 1024 words (the exact row size varies between DRAM parts). A word is an array of 4 bytes, each of which is 8 bits. On a 64-bit machine, a word is an array of 8 bytes. A word is treated as a single integer, but the order of its bytes in memory varies between machines; see Big Endian and Little Endian.
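Python's standard `struct` module makes the byte-order difference easy to see: the same 32-bit word laid out little-endian and big-endian.

```python
import struct

value = 0x01020304  # one 32-bit word

little = struct.pack("<I", value)  # little-endian: least significant byte first
big = struct.pack(">I", value)     # big-endian: most significant byte first

print(little.hex())  # 04030201
print(big.hex())     # 01020304

# Reading the bytes back with the matching byte order recovers the same integer.
assert struct.unpack("<I", little)[0] == struct.unpack(">I", big)[0] == value
```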

Why memory is evil

We've gotten a handle on how fast the processor itself can run, but what about the gigabytes of DRAM which we hope to attach to it one day? Well, now we have to look at how long it takes a signal to go off the chip. Intuitively, our main memory read latency is going to be at least the time for a signal to travel from the processor to the DRAM chip, plus the time the DRAM's own circuitry needs to select and read out a line, plus the trip back to the processor. Because processors are small compared to distances on the motherboard, it is easy to see how a single main memory access can cost hundreds of clock cycles, making it hundreds of times slower than an on-chip operation.
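A rough latency budget makes the comparison concrete. Every input here is an assumption chosen for illustration: board traces carrying signals at about half of c, 10 cm from the processor to the memory, 50 ns spent inside the DRAM, and a 3 GHz processor clock.

```python
C = 299_792_458.0          # speed of light in vacuum, m/s
SIGNAL_SPEED = 0.5 * C     # assumption: board traces carry signals at ~c/2


def memory_latency_cycles(distance_m, dram_access_s, clock_hz):
    """Processor cycles spent waiting on one main memory read."""
    flight_s = 2 * distance_m / SIGNAL_SPEED  # there and back again
    total_s = flight_s + dram_access_s
    return total_s * clock_hz


# Hypothetical: 10 cm to the DRAM, 50 ns inside it, 3 GHz core clock.
print(round(memory_latency_cycles(0.10, 50e-9, 3e9)))  # 154
```

Under these made-up but plausible numbers, one read costs about 150 cycles in which the processor could otherwise have done about as many single-cycle operations. Note that most of the time goes to the DRAM's internal circuitry rather than to the wires, which is one more price of going off chip.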

Okay. Well, that's quite a helping of hardware. I think that's also everything about hardware you'll need to understand the processor designs I'm about to present and the decisions behind them, so without further ado, let's build a stack machine!

^d